How do you evaluate a mobile game's profit potential?

Aleksandr Enin of My.Com's IT Territory Game Studio takes a deep dive into determining which fledgling games can be salvaged and which should have the plug pulled

Dissecting Success

I've been working at My.Com for the past 13 years. It is a very big company made up of more than 10 different studios that mostly make mobile games, and it releases 3-4 games a year on average. This kind of workflow understandably demands considerable expertise in evaluating projects quickly and accurately at every stage of their development.

The biggest challenge in this process comes from projects with mixed results. While it's quite clear what to do with weak or strong projects, mixed projects are harder because you have to shift from evaluating their current stats to evaluating their growth potential.

For instance, if a casual project has a Day 1 retention of 20%, it is definitely a weak project that should be shut down. But what if it's a midcore game with a Day 1 retention of 30%? Not so simple now, is it?

Of course, you can always shut down this midcore game, but then you risk ending a hit. Its potential may not have been explored yet and it is possible that developers need another year for this ugly duckling to transform into a swan.

But if you do give developers this extra year and there's still no swan in the end, the company ends up losing money and opportunity since these same developers could have been working on another game which, maybe, could have been that swan.

To find your way around this conundrum, what you need to do is learn to evaluate the potential as opposed to the current state of a project. But how can you calculate potential using industry standards? Unfortunately, you can't. Industry standards are too vague and universal to look into the soul of a specific project. A magnifying glass isn't enough to see the potential; you need an industrial microscope to do that.

In my experience, all decisions about these ambiguous projects are made on the basis of intuition, a vote of confidence in the team, or endless soft launches. This makes the process costly, unpredictable, and sometimes even painful.

So today I would like to talk about this microscope and my experience using it. Maybe this information will help some talented developer make the right decision at the right moment and lead to the birth of an excellent game.

Individual Potential is Overestimated

Let's start by questioning what we currently know about assessing a project's potential and predicting the success of one game or another. Naturally, we all have our own opinions about these things, but they are most likely wrong: they rest on subjective views of projects, which makes them hostage to all sorts of cognitive biases.

We here at My.Com had to go through a long journey to understand that experts who can correctly evaluate the potential of one game after another simply do not exist. Of course, whenever a game succeeds, several experts will say they predicted it and had been vocal about it. But if you study the history of their predictions, you will see that a large proportion of their forecasts were simply off target.

So how do you solve this? My solution was inspired by our own track record.

My studio is part of the My.Com group of companies, which means I, like all of our other developers, have access to the statistics of all our partners' studios, not just my own. So I had access to dozens of different projects, and I picked the ones that met my criteria of success and started crunching the data to find common elements that would reveal a formula.

What Counts as a Success?

In order to continue this discussion, we need to agree on the criteria which make a game successful. According to my system, these games should make at least $1 million after App Store and Google Play fees, and have a margin of more than 30% one year after global launch.
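The two thresholds can be expressed as a simple check. This is a minimal sketch: the article does not define how margin is computed, so the formula used below, (net revenue - costs) / net revenue, is an assumption, and the names `YearOneResults` and `is_success` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class YearOneResults:
    """Hypothetical summary of a game's first year after global launch."""
    net_revenue_usd: float  # revenue after App Store and Google Play fees
    total_costs_usd: float  # assumption: development, marketing and operating costs

    @property
    def margin(self) -> float:
        # Assumed definition: (net revenue - costs) / net revenue
        if self.net_revenue_usd <= 0:
            return 0.0
        return (self.net_revenue_usd - self.total_costs_usd) / self.net_revenue_usd


def is_success(results: YearOneResults,
               min_net_revenue: float = 1_000_000,
               min_margin: float = 0.30) -> bool:
    """At least $1M net revenue AND a margin of more than 30%."""
    return results.net_revenue_usd >= min_net_revenue and results.margin > min_margin


print(is_success(YearOneResults(2_000_000, 1_200_000)))  # 40% margin
print(is_success(YearOneResults(900_000, 300_000)))      # below $1M net revenue
```

Both conditions must hold: a game that clears $1 million with a thin margin, or a profitable niche game under $1 million, does not count.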

My.Com releases quite a few of these games on a regular basis, so I had enough data to draw my first conclusions fairly soon. I have broken them down into two postulates:

1. There are no successful games without successful marketing

2. There is no successful marketing without a successful game

That's right: our internal product research has shown that successful products always have a history of success for both the game and its marketing. Although these two factors do not correlate directly, the necessary level of success is found where their lines cross.

This leads to the next key point I would like to make: it is futile to compare projects using game metrics without combining them with marketing metrics. It's like judging an object's color by looking only at its shadow.

So now we need to further define criteria for success from two separate perspectives: marketing and game metrics.

Evaluating a Product's Success - Marketing

After studying the marketing of our greatest games, it quickly became evident that the key to their success is in the project's ability to attract many cheap registrations. Revenue targets were reached through these registrations, and after further optimization, we reached the required margins.

To describe the effect that allows a project to attract a lot of traffic, we need to look at the Install Rate (IR) of advertising. In my opinion, it is the key parameter for judging a project's marketing success, and it is directly tied to Click-Through Rate (CTR) and Conversion Rate (CR): IR = CTR * CR.
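The IR formula can be computed from raw funnel counts. A minimal sketch, assuming every ad click lands on the store page (so store-page CR is installs divided by clicks); the function name `funnel_rates` and the counts are hypothetical. Because the clicks cancel out, IR reduces to installs per ad impression.

```python
def funnel_rates(impressions: int, clicks: int, installs: int) -> dict[str, float]:
    """CTR, CR and IR for one ad creative and its store page.

    Assumption: every click lands on the store page, so
    CR = installs / clicks. Then IR = CTR * CR = installs / impressions.
    """
    if impressions <= 0 or clicks <= 0:
        raise ValueError("need at least one impression and one click")
    ctr = clicks / impressions   # ad creative performance
    cr = installs / clicks       # store page conversion
    return {"ctr": ctr, "cr": cr, "ir": ctr * cr}


rates = funnel_rates(impressions=100_000, clicks=1_200, installs=420)
print({name: f"{value:.2%}" for name, value in rates.items()})
```

Keeping CTR and CR separate, rather than reporting IR alone, shows whether a weak IR comes from the creative or from the store page.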

To achieve a high IR you need both a high click-through rate on your creatives and a high store page conversion rate. You can achieve this only when your creatives and your store page carry the same message. A consistent message captivates the audience and sets the correct expectations for the product, which is a must for retaining the users you attract. If your message is captivating but creates false expectations, players will leave quickly and the cost of luring them in won't be recouped.

In my experience, the first sign of looming success is a high CTR on gameplay video creatives (>1%). The store page's job is then to support and expand on the message of the video, and if all is done right, you get a CR of 30-40%, which together give an overall IR of 0.4-0.5%. These are signature values of a project's successful marketing, and they mean the project should be developed despite any other problems it may have.
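The signature values can be checked arithmetically. At exactly 1% CTR, a 30-40% CR gives an IR of 0.3-0.4%; reaching 0.4-0.5% takes a CTR somewhat above 1% (for example, 1.25% × 40% = 0.5%). The sketch below flags the signature using a hypothetical function, `has_marketing_signature`; treating 30% as a hard CR floor is an assumption, since the text gives a range rather than a cutoff, and the test pairs are made up.

```python
def has_marketing_signature(video_ctr: float, store_cr: float) -> bool:
    """Check the signature values from the text (rates as fractions).

    Gameplay-video CTR must exceed 1%. Treating 30% as a hard floor for
    store-page CR is an assumption: the article gives a 30-40% range.
    """
    return video_ctr > 0.01 and store_cr >= 0.30


# Hypothetical (CTR, CR) pairs from three creative tests
for ctr, cr in [(0.0125, 0.40), (0.008, 0.45), (0.011, 0.22)]:
    ir = ctr * cr  # IR = CTR * CR
    print(f"CTR {ctr:.2%}, CR {cr:.0%} -> IR {ir:.2%}, "
          f"signature: {has_marketing_signature(ctr, cr)}")
```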

How can the Install Rate be affected?

Because the IR reflects the audience's reaction, it should be considered an external factor: it can only be measured, not controlled. It is the result of the producer's work during pre-production, when the game's concept, setting, and release timing were approved. Greenlighting a project can therefore become the producer's blessing or curse.

Of course, I can only say this so confidently if the following conditions are met:

● The project's positioning has been executed correctly and advertising assets were produced at a decent quality level without any major mistakes.

● We are looking at the longer term. In the short term, you can give your IR a nitro boost from time to time with a great advertising asset or two, but those burn out quickly and the project descends back to its baseline. In the long run, all we can really do with the index is to slow down its drop.

When should we measure the Install Rate?

Right off the bat! Pretty much as soon as you have an understanding of the game's concept and setting. You don't have to wait until the game reaches positive ROI and target retention to check the index. As I said earlier, the first sign of a successful game is a high CTR on gameplay videos, and you don't need to wait for the soft launch to measure it. In some cases you don't even need to wait for the project's development to kick off.

To do that, run user acquisition (UA) for your video ads on video networks: their target audiences are broad, so your results will be as unbiased as they can get. This allows an objective comparison of different projects.
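The article does not say which statistical method it uses to compare projects' CTRs. One common option, swapped in here as an assumption, is to rank concepts by the lower bound of a 95% Wilson score interval, so that a concept tested on a small audience is not ranked above a well-measured one purely because of noise. The concept names and counts are hypothetical.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval (95% by default) for a proportion such as CTR."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1 + z * z / trials
    centre = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return centre - half, centre + half


# Hypothetical video-network test results: (clicks, impressions) per concept
tests = {"concept_a": (1_300, 100_000),
         "concept_b": (950, 100_000),
         "concept_c": (60, 4_000)}

# Rank by the pessimistic (lower) end of each interval
ranked = sorted(tests.items(), key=lambda kv: wilson_interval(*kv[1])[0], reverse=True)
for name, (clicks, shows) in ranked:
    lo, hi = wilson_interval(clicks, shows)
    print(f"{name}: CTR {clicks / shows:.2%} (95% CI {lo:.2%}-{hi:.2%})")
```

Here concept_c has the highest raw CTR but the widest interval, so it ranks below concept_a until more impressions narrow its estimate.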

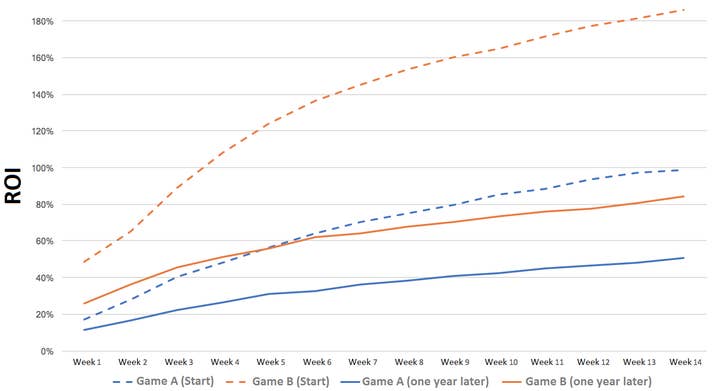

It is also very important to bear in mind the life cycle of the projects you are comparing: if they are at different ages, you will draw the wrong conclusions. Ignoring the life cycle is a grave mistake that even professionals tend to make. Here's an example of how the ROI of two of our hit projects' marketing campaigns changed just one year after launch.

This sharp contrast in ROI shows that the target audience you engage at the start is very cheap to acquire and pays well. But eventually that audience runs its course, and returns dry up despite a year of tweaks. So when you're comparing two projects, always mind their life cycles.
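One way to respect life cycles is to index marketing ROI by months since global launch and compare projects only at ages both have reached. A minimal sketch; the record layout, the ROI definition (revenue - spend) / spend, and the figures are all hypothetical.

```python
from collections import defaultdict


def roi_by_age(campaigns: list[dict]) -> dict[str, dict[int, float]]:
    """Aggregate marketing ROI per project and per month since global launch.

    Hypothetical record layout:
    {"project": str, "months_since_launch": int, "spend": float, "revenue": float}
    ROI is taken here as (revenue - spend) / spend.
    """
    spend = defaultdict(float)
    revenue = defaultdict(float)
    for c in campaigns:
        key = (c["project"], c["months_since_launch"])
        spend[key] += c["spend"]
        revenue[key] += c["revenue"]
    result = defaultdict(dict)
    for (project, age), s in spend.items():
        if s > 0:
            result[project][age] = (revenue[(project, age)] - s) / s
    return dict(result)


def compare_at_same_age(roi: dict[str, dict[int, float]],
                        a: str, b: str) -> dict[int, tuple[float, float]]:
    """Pair up ROI values only for life-cycle months both projects have reached."""
    shared_ages = sorted(set(roi[a]) & set(roi[b]))
    return {age: (roi[a][age], roi[b][age]) for age in shared_ages}


# Hypothetical data: project X is a year old, project Y has just launched.
campaigns = [
    {"project": "X", "months_since_launch": 1, "spend": 100.0, "revenue": 180.0},
    {"project": "X", "months_since_launch": 12, "spend": 100.0, "revenue": 70.0},
    {"project": "Y", "months_since_launch": 1, "spend": 200.0, "revenue": 300.0},
]
roi = roi_by_age(campaigns)
print(compare_at_same_age(roi, "X", "Y"))  # only month 1 is compared
```

Comparing X's month-12 ROI with Y's month-1 ROI would make X look like the weaker project; aligning by age avoids that trap.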

Evaluating a Product's Success - Game Metrics

As I indicated at the beginning of the article, marketing metrics are only one of the two main pieces of the puzzle that is a project's potential. The other is game metrics.

Unlike marketing IR, game metrics are very hard to compare. Over my 13 years at the company we've had plenty of "Holy Wars" trying to settle on a single method for evaluating a game's quality. None of these attempts succeeded, because they always ran into counterarguments about genre differences.

All of our products are called games, but in truth they are all very different products that are united only by their entertaining nature. All games entertain players, but every genre has its specifics. This means that it is only correct to compare games of the same genre. But if you look at the genres of mobile games on a timeline, you will see that they evolve perpetually and you get all sorts of explosive combinations when genres mix and give birth to new genres and subgenres.

This happens because of the nature of the mobile games market; it rewards the games that were the first to provide a unique experience that can be monetized in the long run. This makes games an extremely elastic and dynamic product where genre borders are blurred and breaking games down to gaming metrics is a challenge.

So when we speak about mobile games and forming the criteria to evaluate their game metrics, we find ourselves in an extremely chaotic environment that does not allow comparisons based on mathematical calculations. The only thing we can do in these conditions is a rough comparison of game metrics at their extremes, which can split games into good and bad but cannot provide any insight into the potential of a game with mixed results.

So what do we do? Is there a mathematically backed solution rather than one based on trusting this or that developer?

I think so.

We did not come to this understanding straight away in our studio. It wasn't discovered systematically; it happened by chance, and for that we should thank Google Play. Their market analysis was the starting point for our own research, which in turn gave us the answer.

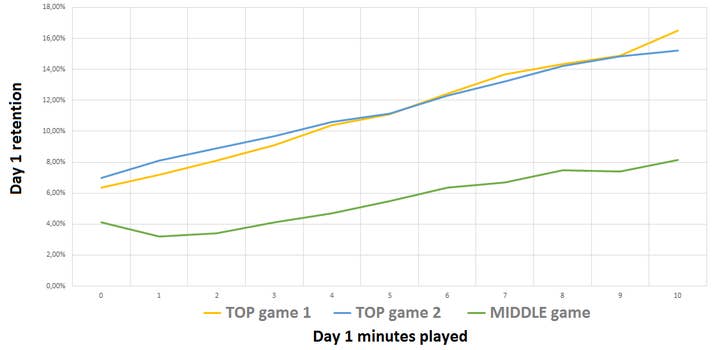

This happened in Brazil in 2017. Google Play held a conference there for its developers and shared all sorts of information with them. One of the presentation slides showed four groups of projects whose success was measured by their retention curve over the first 10 minutes of play.

At that time we were obsessed with improving retention in our game Juggernaut Wars, so this research inspired us greatly. It suggested looking at retention from a new point of view: how high it is from the very first minute of the game, and how strongly it grows right after that.

Google Play's data suggested that the stronger the starting point and growth in the first minutes, the higher the game's potential popularity. We used this concept for our own research and it worked!

We ended up with this chart showing the retention of two of our hit games and one mid-performing game, and it correlated very accurately with their commercial success.

"Wow, you can actually assess a game's hit potential by its first 10 minutes," we thought.

To fully understand the power of that conclusion, consider this: just one minute spent playing a game already gives a stable, measurable chance that the player will return the following day. And in a hit game, that single minute is enough to make a huge difference in retention compared with a game that isn't a definite hit. What can a player really see in just one minute? Next to nothing: a glance at the setting and the basic mechanics. In other words, they see what is impossible to change without overhauling the whole project. You could say this is the project's DNA.

This is how the hypothesis that every project has DNA was born. It can be interpreted as the metric that gives you a mathematical representation of a game's potential.

A project's DNA is a set of fundamental aspects of a game that a player dives into in the first minutes of playing. It determines the chance of retention after the first minute as well as the marginal growth of that chance with every subsequent minute in the game. The higher the starting value and the higher the marginal growth, the stronger the DNA and its potential.
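Here is a minimal sketch of how the DNA curve and its two parameters (starting value and growth) might be computed. It assumes each new user is bucketed by the length of their first session, rounded up to whole minutes, that "retention" means the Day 1 return rate of each bucket, and that growth is the relative change from minute 1 to minute 10. These definitions, the `NewUser` record, and the toy data are assumptions, not My.Com's actual pipeline.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class NewUser:
    first_session_minutes: int  # first-session length, rounded up to whole minutes
    returned_day1: bool         # did the user come back the next day?


def minute_retention_curve(users: list[NewUser], max_minute: int = 10) -> list[Optional[float]]:
    """Day 1 retention for each first-session-length bucket, minutes 1..max_minute.

    Assumption: bucket t holds users whose first session ended during minute t.
    Empty buckets yield None.
    """
    totals = [0] * (max_minute + 1)
    returned = [0] * (max_minute + 1)
    for user in users:
        t = user.first_session_minutes
        if 1 <= t <= max_minute:
            totals[t] += 1
            returned[t] += int(user.returned_day1)
    return [returned[t] / totals[t] if totals[t] else None
            for t in range(1, max_minute + 1)]


def dna_summary(curve: list[Optional[float]]) -> tuple[float, float]:
    """(starting value, relative growth from the first to the last minute)."""
    start, end = curve[0], curve[-1]
    if not start or end is None:
        raise ValueError("first and last minute need data and non-zero retention")
    return start, end / start - 1


# Toy data: 100 users per minute; 10 + 2t of the minute-t group return on Day 1.
users = [NewUser(t, i < 10 + 2 * t) for t in range(1, 11) for i in range(100)]
curve = minute_retention_curve(users)
start, growth = dna_summary(curve)
print(f"minute-1 retention {start:.0%}, growth by minute 10 {growth:+.0%}")
```

As the article stresses, the absolute numbers only mean something next to curves built the same way, so the bucketing rule should stay fixed across every project you compare.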

This microanalysis lets us look beyond genre boundaries and see a project completely raw. When we assess a player's willingness to continue playing after the first minute and measure how much more inclined they are to keep playing with every additional minute, what we get is the player's subconscious reaction. This short timeframe gives a player too little information to make a conscious decision, so the decision is made subconsciously. If a game's concept and implementation are strong enough, they keep the player engaged on a subconscious level, and the longer the player plays, the stronger that influence becomes.

When is the right moment to assess the DNA?

This concept works once a project has at least 10 minutes of ready-for-release quality gameplay.

Once the game is up and running well enough, its hit potential can in theory be measured at the vertical slice stage. In practice, you can measure it no earlier than the closed alpha stage, since you need a large volume of data to build the curve. This research is based on classic by-the-minute retention: to compile the data into a chart, you need representative groups of users for each of the 10 minutes. Our estimates suggest that at least 40,000 registrations are the required minimum. As soon as you can put that many users through 10 minutes of release-quality gameplay, you will be able to determine the project's DNA.
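The 40,000-registration minimum can be sanity-checked bucket by bucket. This sketch uses the normal approximation for a binomial proportion (an assumption; the article does not say how the figure was derived) and lists the minutes whose user group is too small for a chosen margin of error. The split of users across minutes, the 30% expected retention, and the ±2-point target are invented for illustration. With an uneven split, the smallest buckets are the bottleneck.

```python
import math


def margin_of_error(p: float, n: int, z: float = 1.96) -> float:
    """Approximate 95% margin of error for a retention rate p measured on n users."""
    if n <= 0:
        raise ValueError("n must be positive")
    return z * math.sqrt(p * (1 - p) / n)


def undersized_buckets(bucket_sizes: dict[int, int], expected_retention: float,
                       max_margin: float) -> list[int]:
    """Minutes whose user groups are too small for the chosen precision."""
    return [minute for minute, n in sorted(bucket_sizes.items())
            if n == 0 or margin_of_error(expected_retention, n) > max_margin]


# Hypothetical split of 40,000 registrations by first-session length (minute -> users)
sizes = {1: 9_000, 2: 6_500, 3: 5_000, 4: 4_200, 5: 3_600,
         6: 3_100, 7: 2_700, 8: 2_300, 9: 2_000, 10: 1_600}

print(sum(sizes.values()), undersized_buckets(sizes, expected_retention=0.30, max_margin=0.02))
```

Under these assumptions, minutes 9 and 10 would need more users before their retention could be read to within two percentage points.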

How to interpret the DNA?

The result is a curve whose values are only meaningful when compared with other curves calculated the same way. Minute-by-minute retention depends heavily on the project: it's important to know how users log in and log out and how the server records this data. You will get the most value from comparing projects with similar server solutions.

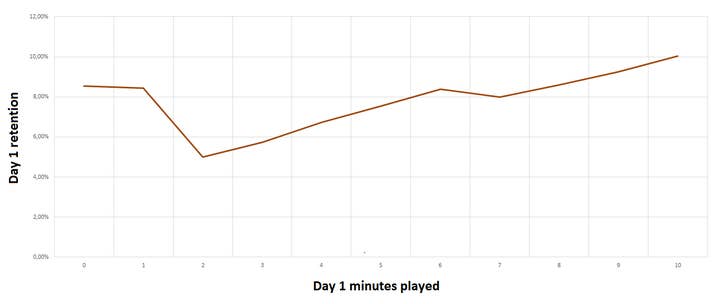

Even if you have nothing to compare with, you can still assess the project by looking at the growth dynamics. A successful game would have retention growing with every passing minute, so it means the more that players play, the more they like it. If retention is not growing or even dropping, it indicates a fundamental problem. I call this problem a wormhole. Here's an example of an evident wormhole in one of our games.

As you can see from the chart, retention growth over the first 10 minutes is very low. While our best-selling games see it at 250%, here it's just 20%. At the same time retention at the second minute is lower than after the first minute, and that means the game is not only failing to engage, it is losing its attracted audience. As long as a project has a wormhole, it cannot effectively acquire new players.
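The wormhole check described above (retention falling from one minute to the next, plus weak overall growth) can be sketched as follows. The two curves are hypothetical, growth is assumed to mean relative change from the first to the last minute (so +250% corresponds to 2.5), and the function names are illustrative.

```python
from typing import Optional


def find_wormholes(curve: list[Optional[float]]) -> list[int]:
    """1-based minutes at which retention is lower than at the previous minute."""
    drops = []
    for i in range(1, len(curve)):
        prev, cur = curve[i - 1], curve[i]
        if prev is not None and cur is not None and cur < prev:
            drops.append(i + 1)
    return drops


def growth_over_window(curve: list[Optional[float]]) -> float:
    """Relative growth from the first to the last minute (2.5 means +250%)."""
    first, last = curve[0], curve[-1]
    if not first or last is None:
        raise ValueError("first and last minute need data and non-zero retention")
    return last / first - 1


# Hypothetical curves for minutes 1..10
healthy = [0.10, 0.12, 0.15, 0.18, 0.21, 0.24, 0.27, 0.30, 0.32, 0.35]
leaky = [0.20, 0.18, 0.19, 0.20, 0.21, 0.22, 0.22, 0.23, 0.23, 0.24]
for name, curve in [("healthy", healthy), ("leaky", leaky)]:
    print(f"{name}: drops at minutes {find_wormholes(curve)}, "
          f"growth {growth_over_window(curve):+.0%}")
```

A flat step (equal retention in consecutive minutes) is not flagged here; only an actual decline counts as a drop.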

Making the call

Working at a large company that unites more than 10 different studios, each constantly engaged in several projects, I had the opportunity to study our success stories, both through data analysis and through my own experience.

My task was to learn to evaluate projects not only by splitting them into strong and weak, but also by creating a method to assess projects with mixed results through their growth potential, which at times was not yet evident.

I hope my research helps talented developers make well-considered decisions on complex issues such as launching or closing a project. Working on a game takes not just effort but also time. While developers are always eager to put in the hard work, time is a very limited resource, so use it wisely. Good luck!