Ubisoft's "Minority Report of programming"

La Forge claims its Commit Assistant AI can cut programming time by 20 per cent by detecting bugs as they're introduced

Artificial intelligence can do a lot more than just keeping NPCs from acting like fools. During the Ubisoft Developers Conference in Montreal this week, Ubisoft gave a handful of journalists a look at some of the work the publisher is doing with AI and machine learning. Some of it had a very clear impact on the games people play, like teaching NPCs how to drive in Watch Dogs, or creating For Honor bots to help playtest combat mechanics for developers.

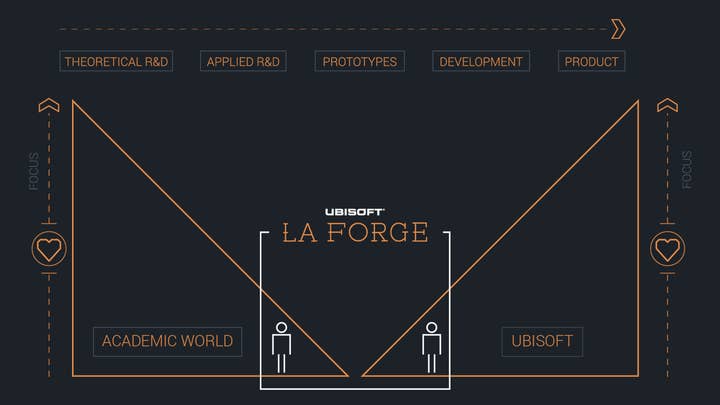

But perhaps the most significant AI/machine learning project they talked about would be functionally invisible to players. That project, Commit Assistant, was described by Ubisoft's Yves Jacquier as "the Minority Report of programming." Commit Assistant is the product of La Forge, the Ubisoft Montreal collaborative R&D division Jacquier heads, and it could do for programmers what spell-check did for writers.

When a programmer adds new code to a game, Commit Assistant is intended to flag parts of the code that are introducing bugs. It can do this because La Forge trained it using roughly a decade's worth of code changes and bug reports from Ubisoft games at every stage of development. It analyzes bugs and regressions that were added to code previously and creates a "signature," checking what parts of the program the new code is touching and estimating how likely that is to produce problems.
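Ubisoft has not published how Commit Assistant builds its signatures, so the following is only a toy sketch of the general idea described above: learn from past changes which parts of a codebase tend to be involved in bugs, then estimate how risky a new change is from the files it touches. The function names, the file names and the smoothing scheme are all assumptions made for illustration, not details from La Forge.

```python
from collections import defaultdict


def train(history):
    """Count, per file, how many past commits touched it and how many of
    those commits were later linked to a bug.

    `history` is an iterable of (files_touched, introduced_bug) pairs.
    This data shape is hypothetical, not Ubisoft's actual format.
    """
    touched = defaultdict(int)
    buggy = defaultdict(int)
    for files, introduced_bug in history:
        for name in files:
            touched[name] += 1
            if introduced_bug:
                buggy[name] += 1
    return dict(touched), dict(buggy)


def risk(model, files):
    """Estimate the chance that a commit touching `files` introduces a bug.

    Each file gets a smoothed historical bug rate, (buggy + 1) / (touched + 2),
    and the files are treated as independent. Both choices are simplifying
    assumptions for this sketch.
    """
    touched, buggy = model
    p_clean = 1.0
    for name in files:
        p_bug = (buggy.get(name, 0) + 1) / (touched.get(name, 0) + 2)
        p_clean *= 1.0 - p_bug
    return 1.0 - p_clean


# Hypothetical history: physics code has been bug-prone, UI code has not.
history = [
    (["physics.cpp"], True),
    (["physics.cpp"], True),
    (["ui.cpp"], False),
    (["ui.cpp"], False),
]
model = train(history)
print(risk(model, ["physics.cpp"]))
print(risk(model, ["ui.cpp"]))
print(risk(model, ["physics.cpp", "ui.cpp"]))
```

A production system would presumably look at far richer signals than file names, but the shape is the same: past outcomes in, a risk estimate for the new commit out.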

So far the results are promising. Ubisoft claims it accurately catches 6 out of 10 bugs and has a 30 per cent false alarm rate. As developers use it more and it re-trains itself, the expectation is that the system will flag bugs with higher confidence and raise fewer false alarms. And it doesn't just identify potential bugs; it also tells devs what the apparent issue is and suggests a fix for it. Ubisoft has already rolled out Commit Assistant to the developers of Rainbow Six Siege and to an internal tech group working on traversal tools.
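To make those two figures concrete, here is a rough worked example. It assumes "false alarm rate" means the share of all flags that turn out to be wrong; Ubisoft did not specify which definition it uses, and the 100-bug sample is hypothetical.

```python
def flag_breakdown(real_bugs, catch_rate=0.6, false_alarm_share=0.3):
    """Split a batch of real bugs into caught and missed, and work out how
    many flags the tool would raise in total.

    Assumes `false_alarm_share` is the fraction of all flags that are wrong,
    which is one possible reading of the quoted 30 per cent figure.
    """
    caught = real_bugs * catch_rate
    missed = real_bugs - caught
    # If 30% of flags are false, the caught bugs are the other 70% of flags.
    total_flags = caught / (1.0 - false_alarm_share)
    false_alarms = total_flags - caught
    return {
        "caught": caught,
        "missed": missed,
        "total_flags": total_flags,
        "false_alarms": false_alarms,
    }


for name, value in flag_breakdown(100).items():
    print(f"{name}: {value:.1f}")
```

Under that reading, out of 100 real bugs the tool would catch 60 and miss 40, and programmers would also have to dismiss roughly 26 flags that pointed at nothing.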

Jacquier said the system could save programmers 20 per cent of their time. When asked how that figure was determined, he explained it was an estimate based on a recent test case.

"To make sure it worked before we put it in the hands of production, we froze the process and observed a couple of productions for three to six months, I believe," Jacquier said. "Just to see how they behave, what are the bugs they created. Based on their history, we simply ran a program saying how many of those bugs would we have caught in that time. By doing that and estimating false alarms, etc. we came to the assumption that it was about 20 per cent of the work that had been done by this team during this time."

He also stressed that the estimate only covered programmers' time. It didn't address time savings among the QA staff from the apparent 60 per cent of bugs they wouldn't need to spend time reproducing, documenting and reporting.

When asked how this might shorten development times, Jacquier said the value was more in freeing people up to do other things. He likened it to when Ubisoft first ramped up its motion capture efforts more than a decade ago.

"When we first introduced mocap, it was perceived as a threat, like it would cut animators' jobs," Jacquier said. "It was the opposite. The mocap was facilitating animation a lot. It was helping animators to focus on where they have real added value, which is the emotion, the spectacular aspect of the animation. We created more spectacular animation, we wanted more animation in games, which created more animators at the end of the day."

That might come as a comfort to many developers, as advances in AI are touching a multitude of areas within game development. Jacquier said that AI programs can already create assets for games that are about 80 per cent as good as what human developers can produce.

"Today the AI can redo the job for very specific things, such as text-to-speech, as long as you don't put in emotion," Jacquier said. "Or animation, but only for locomotion. Facial animation, as long as you don't expect emotion on the face but mostly lips moving, etc."

Right now we need developers to take characters the rest of the way, but Jacquier believes that's a temporary state of affairs.

"I think it will take three years before you can have a fully generated NPC in a game," he said. "A character with its own animation, modelling, facial expression, text-to-speech with different emotions or variation. And that's totally comparable to something you're doing today with animators, people who are specialists in speech, modellers, etc."

Again, Jacquier doesn't see this costing jobs so much as freeing developers up to add more to their games. Still, he admits that's not his call to make; he's just making tools that will hopefully enable others to take gaming in new directions.

"What I think is AI is just a tool," Jacquier said. "It's always been a tool, and it will augment people's capabilities. Like any other tool, it takes time to understand what it does, and what it does not. And it has to be used the proper way."

Talk of using AI and machine learning "the proper way" implies there is also an improper way to use them. And that brings up all manner of sci-fi movie premises and dismaying tech articles.

"One thing that baffles me most of the time is when you talk about recent advancements in AI, people think SkyNet," Jacquier said. "People already think we're up to artificial general intelligence and robots taking over humanity. I don't think that's the right question. The right questions today are, 'What are companies doing with my data? What's the real usage of what I'm leaving online? How are those companies using that to create AI targeted ads, or to provide that to other companies?' And I think that's a real big deal in AI, and what we should all be more concerned about.

"For the rest, as long as we're very transparent in what AI can do and how we reach those results in terms of data usage, it's almost an ethical choice to let the human decide what to do with that. Just like we did 11 years ago with mocap; we left that in the hands of the animators because they know how to use that. And we think with the AI tools today, it's the exact same thing. What I'm expecting from our creators, it will free up more of their time to provide more exciting and enticing works and gaming experiences."

Disclosure: Ubisoft paid for our travel and accommodations for the event.